#Unbeatable chess ai series#

Here is the text of a single PGN file record: 1.

This gives us the eval paired with the position (computed from the series of moves).

#Unbeatable chess ai portable#

PGN stands for “Portable Game Notation”, a text-based format for encoding chess games as a series of moves in algebraic notation.
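As a rough illustration of reading evals out of PGN movetext, here is a minimal sketch. It assumes the engine evaluations appear as `[%eval ...]` annotations inside the `{ ... }` comment that follows each move. The sample movetext, the regular expression, and the `extract_evals` helper are all made up for this example and are not taken from the dataset.

```python
import re

# Hypothetical movetext, invented for illustration; it is not a record
# from the dataset. The "{ [%eval ...] }" comment layout is an assumption.
SAMPLE_MOVETEXT = (
    "1. e4 { [%eval 0.2] } 1... e5 { [%eval 0.3] } "
    "2. Nf3 { [%eval 0.25] } 2... Nc6 { [%eval #5] }"
)

MOVE_WITH_EVAL = re.compile(
    r"(?:\d+\.+\s+)?"          # optional move number such as "1." or "1..."
    r"([A-Za-z][^\s{}]*)"      # the move itself in algebraic notation
    r"\s*\{[^}]*?"             # start of the comment that follows the move
    r"\[%eval\s+([^\]\s]+)\]"  # the eval annotation inside that comment
)


def extract_evals(movetext):
    """Return (move, eval) pairs; eval is None when it is not a plain number."""
    pairs = []
    for move, raw in MOVE_WITH_EVAL.findall(movetext):
        try:
            score = float(raw)
        except ValueError:
            # Non-numeric values (assumed here to mark a forced mate) are
            # left as None rather than guessed at.
            score = None
        pairs.append((move, score))
    return pairs


if __name__ == "__main__":
    for move, score in extract_evals(SAMPLE_MOVETEXT):
        print(move, score)
```

Replaying the moves in order on a board then yields the position that each eval belongs to.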

With the average chess game lasting around 40 moves, or 80 positions (each side gets a turn per “move”), July should contain 441,600,000 non-unique positions. This seems sufficient for creating our initial dataset.
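The arithmetic behind that estimate can be checked with a few lines. This is only a back-of-the-envelope sketch: the 80 positions per game and the 441,600,000 total come from the text, while the monthly game count is merely what the quoted “about 6%” would imply, not a reported figure.

```python
POSITIONS_PER_GAME = 80          # 40 moves, one position per side per move
TOTAL_POSITIONS = 441_600_000    # estimate quoted in the text
ANALYSED_PERCENT = 6             # "about 6%" of games carry evaluations


def analysed_games(total_positions, positions_per_game):
    """Number of analysed games implied by the position estimate."""
    return total_positions // positions_per_game


def implied_total_games(games_with_eval, analysed_percent):
    """Rough monthly game count implied by the analysed share (an inference)."""
    return games_with_eval * 100 // analysed_percent


if __name__ == "__main__":
    games = analysed_games(TOTAL_POSITIONS, POSITIONS_PER_GAME)
    print(games)                                         # analysed games
    print(implied_total_games(games, ANALYSED_PERCENT))  # implied monthly total
```

The first figure matches the “5.5+ million games” mentioned in the text. The second is approximate, since the 6% share is itself rounded.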

Let’s try it, and train a neural net using supervised machine learning, with the dataset coming from existing engines.

How does an engine determine if a position is good? Given a position, engines return a score of how good the position is from white’s perspective. This score is called an evaluation, typically shortened to eval, and the eval function is at the heart of chess engines. The eval is a relative strength measurement denominated in pawn equivalents. For example, if white is up a pawn, the eval will be +1. If black is up a knight, the eval will be -3. Raw material isn’t the only thing that determines the eval; positional information and future moves are also taken into account.

Traditionally the eval function was implemented by handcrafted algorithms that quantitatively measure concepts like material imbalance, piece mobility, and king safety. These direct measurements, combined with iterative deepening, alone produce superhuman results. Neural networks can substitute for these handcrafted algorithms and return the eval directly, eliminating the need for specialized coding.

Overall, the eval function takes in a position and returns an evaluation score. The output, eval, can be represented by a single float-based neuron. The input, representing the board position, can be encoded using bitboards (64 bits, one for each square on the chess board) for each piece type, plus a few remaining bits for move index, color to move, and en passant square. Together this input data forms a string of 808 bits (1s and 0s) that can be converted to floats and fed into the model directly.

Now that we have planned out our inputs, outputs, and general model concept, let’s start building a dataset. I play on Lichess because it’s free, open source, and run by developers. Lichess hosts downloadable monthly shards of every game played on the website. The notes section mentions a very important detail: “About 6% of the games include Stockfish analysis evaluations”, which in this case would be 5.5+ million games.
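To make the bitboard input described above more concrete, here is a minimal sketch of the piece-placement part of the encoding. It assumes one 64-bit board per piece type and color (12 boards, 768 bits) and reads the position from a FEN string. The board ordering, the square indexing, and the `fen_to_piece_bits` helper are assumptions for illustration. The text does not spell out the exact layout of the remaining bits (move index, color to move, en passant square), so they are left out here.

```python
# Assumed ordering of the 12 bitboards: white pieces first, then black.
PIECES = "PNBRQKpnbrqk"

TOTAL_INPUT_BITS = 808                  # figure quoted in the text
PIECE_BITS = len(PIECES) * 64           # 768 bits under the assumption above
REMAINING_BITS = TOTAL_INPUT_BITS - PIECE_BITS  # left for the extra fields

START_FEN = "rnbqkbnr/pppppppp/8/8/8/8/PPPPPPPP/RNBQKBNR w KQkq - 0 1"


def fen_to_piece_bits(fen):
    """Encode the piece placement of a FEN string as 768 floats (0.0 or 1.0).

    Squares are indexed a1 = 0, b1 = 1, ..., h8 = 63 (an assumed convention).
    """
    placement = fen.split()[0]
    bits = [0.0] * PIECE_BITS
    rank, file = 7, 0                   # FEN lists rank 8 first
    for ch in placement:
        if ch == "/":
            rank -= 1
            file = 0
        elif ch.isdigit():
            file += int(ch)             # a digit means that many empty squares
        else:
            square = rank * 8 + file
            bits[PIECES.index(ch) * 64 + square] = 1.0
            file += 1
    return bits


if __name__ == "__main__":
    encoded = fen_to_piece_bits(START_FEN)
    print(len(encoded), int(sum(encoded)), REMAINING_BITS)
```

Each set bit marks one piece on one square, so the starting position sets exactly 32 of the 768 piece bits.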

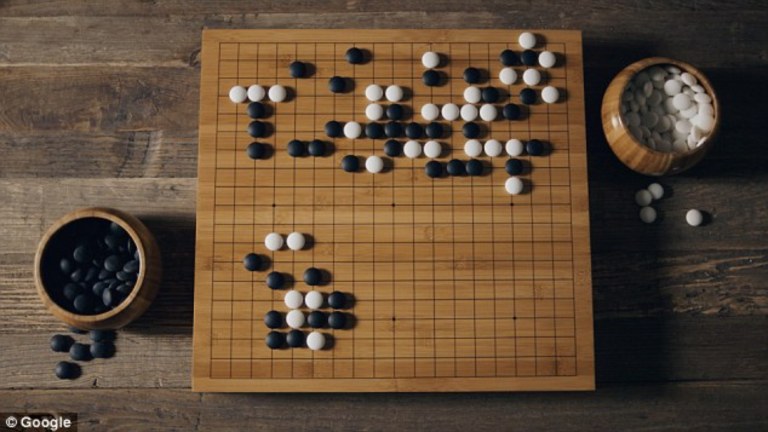

#Unbeatable chess ai free#

Photo by Michael on Unsplash

Introduction

Deep learning has taken over the computer chess world. In 2017 AlphaZero, an iteration of AlphaGo targeting chess, mesmerized chess fans by handily defeating Stockfish. New developments in the space such as Leela Zero (an open source AlphaZero implementation that happens to use my chess lib) and Stockfish NNUE (“efficiently updatable neural network”, with the acronym written in reverse) show neural nets will continue to dominate chess for the foreseeable future.

As a chess enthusiast and AI practitioner, I set out to create my own chess AI but was discouraged by a daunting rumor: AlphaZero cost $35MM to train. AlphaZero trains entirely through reinforcement learning and self play to avoid outside dependencies. While obviously effective, self play is incredibly inefficient from a cost perspective. Today’s engines can already tell the difference between a good position and a bad position. This prior knowledge can be used to bootstrap the learning process and make it possible to train a chess AI at very limited cost (or for free on Colab).